Overview: Technology is allowing communities to understand both the scale and the nature of racial inequity in their midst. By improving access to technology, developing more effective technology, and using it to drive policy, they’re beginning to address long-standing racial issues.

Is Technology Biased?

One of the most uncomfortable discoveries in the early years of machine learning was that long-standing social biases emerged from data. A University of Virginia professor, for example, discovered that image recognition software was drawing sexist conclusions based on the initial dataset it was fed. It was, quite literally, deciding only women belonged in kitchens.

In government innovation, this sounded a note of caution. While machine learning and data science were being embraced with enthusiasm, discoveries like this were reminders that biases were built into data sets and needed to be compensated for.

Over time, though, the focus has turned toward using data to locate bias and inequity within communities. An analysis of cellphone location data by the Boston Globe, for example, found that the city’s history of segregation lingered in where people went during the day. In particular, “triple disadvantaged neighborhoods,” areas where people from historically disadvantaged neighborhoods traveled only to other neighborhoods with similar challenges, stood out as areas of concern.

Another impact has been that governments are observed and documented by their citizens like never before. This has allowed communities that once struggled to demonstrate the need for change at city agencies, such as the police, to comprehensively document the problems and demand that something be done about them.

As we become more closely networked, technology has also allowed rapid dissemination of presentations, videos, and other material. This is both a positive, in that it makes engaging across communities easier than ever, and a negative, in that it allows rumors and raw data to get out the door without any context or correction.

Nor will this be the end of the data. As health trackers, smartphones, home security cameras, and personal sensors drop in price and become more widely available, and as more of the systems we interact with are connected to each other, more documentation of inequity and problems will emerge. For governments, the question becomes how to draw out the patterns needed to achieve real change.

Improving Access

To begin with, access to the data itself needs to be equitable. One of the stark revelations of 2020 was just how little broadband access there actually was even in highly urbanized communities with well-developed infrastructure. New Orleans, for example, found that 60% of underserved communities had no access to broadband whatsoever.

This is a particular issue with data because the raw datasets can run into the terabytes, and often rely on complex cloud computing services to draw out statistical patterns. Without broadband or a functioning device, it’s difficult or impossible for some in the community to keep pace with the information flow. And without that, they’re unable to question the patterns that might be found.
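To make the scale concrete, here is a rough back-of-the-envelope sketch of how long a one-terabyte dataset would take to download at different connection speeds. The connection labels and speeds are illustrative assumptions, not figures from any city’s data, and real-world transfers would likely be slower than these sustained-speed estimates.

```python
def transfer_hours(size_terabytes: float, speed_mbps: float) -> float:
    """Hours needed to move a dataset at a sustained link speed."""
    bits = size_terabytes * 8e12          # 1 TB = 10^12 bytes = 8 * 10^12 bits
    seconds = bits / (speed_mbps * 1e6)   # Mbps = 10^6 bits per second
    return seconds / 3600


# Hypothetical connection tiers, chosen only for illustration.
connections = [
    ("shared mobile hotspot", 5),
    ("basic home broadband", 25),
    ("fiber", 1000),
]

for label, mbps in connections:
    print(f"{label}: {transfer_hours(1, mbps):.1f} hours")
```

Under these assumptions, a resident on the slowest connection would need weeks of continuous downloading to get the same dataset a fiber subscriber could pull in an afternoon.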

This has created a push for “digital inclusion,” doing the work to provide access to the internet for underserved communities. Some of this is alleviated by private businesses. 5G networks, for example, offer some of the best speeds available.

Ideally, though, getting the infrastructure in place will be a priority. Broadband is increasingly key to education, connection, and employment, and needs to be central to any data equity effort.

Developing More Effective Technology

Another concern is putting the right tools in the right places. The earlier and more often people are exposed to technology, the more adept they are at putting it to positive use.

While STEM programs in disadvantaged schools are admirable, though, they’re only one part of the cycle. A study by the Brookings Institution also found that after reskilling and early educational training, space needed to be made for both entrepreneurship and capital flows to ensure community benefits.

Outside of businesses, this also means asking where technology needs to be and with whom. Over the last two years, the need for remote education drove an enormous outflow of “light” laptops such as Chromebooks and accompanying connection devices. Yet it also raised the question of adults who might need these educational tools as well.

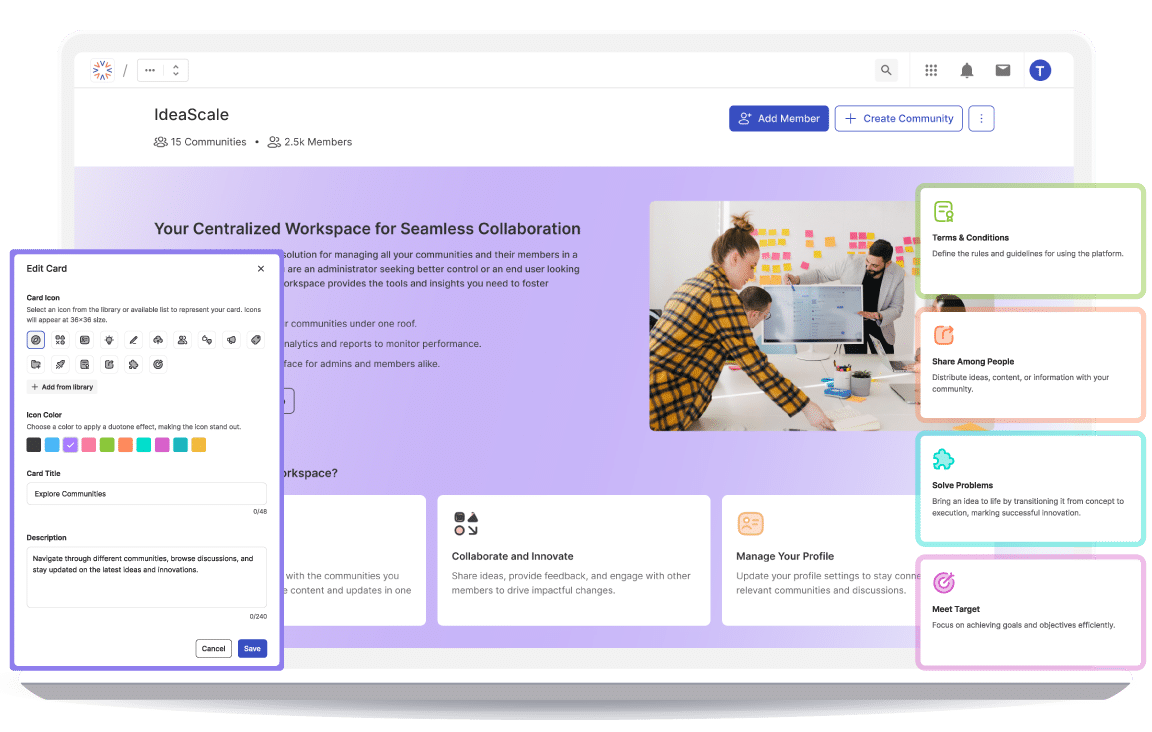

Once the infrastructure and tools are in place, other approaches become possible. Examples include dashboards laying out diversity in city employment, or easily accessible mobile sites that help people find jobs, navigate neighborhoods, or get help from government systems.

Driving Policy

Finally, there’s a fundamental question: What policy do we want these tools and infrastructure to drive?

An example of how focus can decide policy dates back to the 1960s, when nascent computing technology sat at a crossroads. Despite being encouraged to use it to analyze bias and develop better tools for countering it, the Johnson administration instead asked how computing could be used to prevent crime.

That one decision has driven how governments view computing to a surprising degree ever since. Notably, it makes people the “problem” computing is supposed to solve, which brings us back to the beginning and the biased data sets.

Broward County, Florida, attempted to use predictive software to determine which people were most at risk of recidivism. A 2016 ProPublica investigation found the software had a severe racial bias, driven both by how racial groups were seen in the data and how policy fed off that data. It created a vicious spiral where marginalized communities were more likely to be the target of law enforcement, which in turn created data that told the algorithms those communities were more likely to be recidivists.

This doesn’t have to be perpetuated, however. Notice that the algorithm brought the bias in the data into sharp relief. If the same software were instead run on hypothetical defendants, it could be a powerful way to examine flaws in the data.
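One way to run that kind of examination is to compare how often a tool wrongly flags people in each group. The sketch below is a minimal illustration using made-up records; the group labels, field order, and data are all hypothetical, and this is just one common fairness check, not a description of how any particular software or investigation worked.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Share of people who did NOT reoffend but were flagged high risk, per group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    """
    flagged = defaultdict(int)
    did_not_reoffend = defaultdict(int)
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            did_not_reoffend[group] += 1
            if predicted_high_risk:
                flagged[group] += 1
    return {g: flagged[g] / did_not_reoffend[g] for g in did_not_reoffend}


# Entirely hypothetical defendants, for illustration only.
hypothetical_defendants = [
    ("Group A", True, False),
    ("Group A", True, False),
    ("Group A", False, False),
    ("Group A", True, True),
    ("Group B", True, False),
    ("Group B", False, False),
    ("Group B", False, False),
    ("Group B", True, True),
]

for group, rate in false_positive_rates(hypothetical_defendants).items():
    print(f"{group}: {rate:.0%} of non-reoffenders were flagged high risk")
```

A large gap between groups on a check like this would be a signal to go back and question the data and the policy feeding it, before the tool is used on real people.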

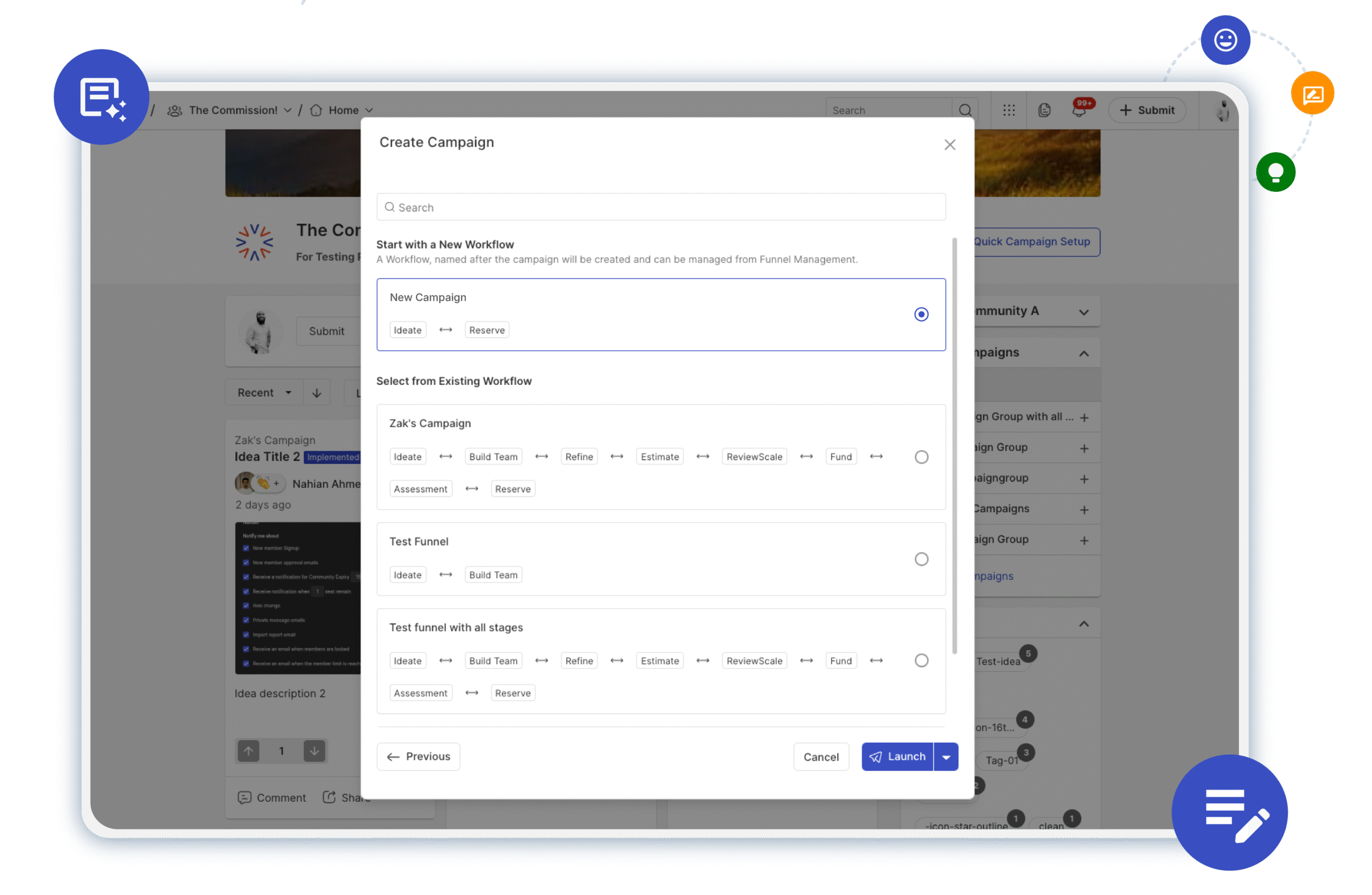

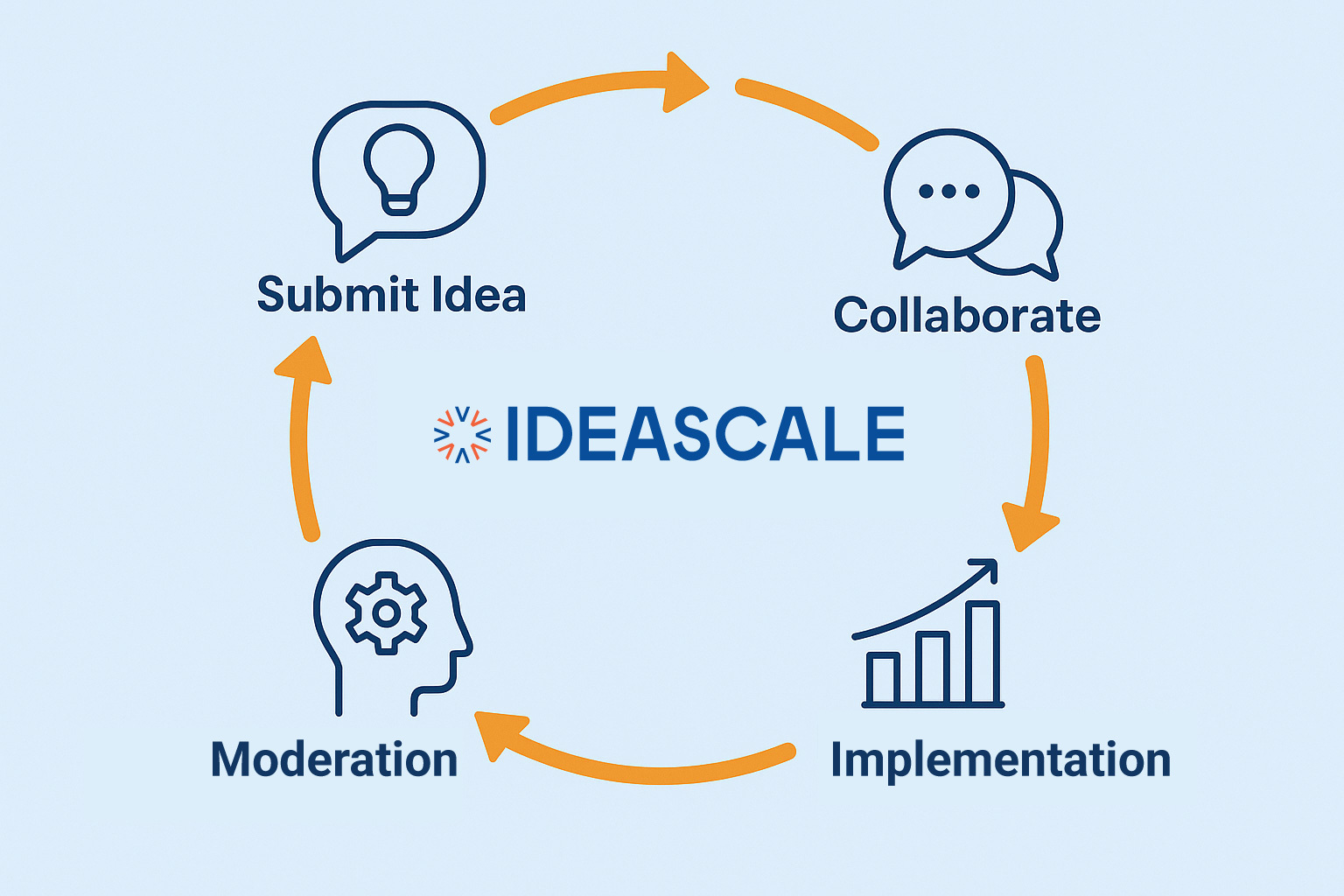

For government innovation, this means starting from first principles and ensuring every stakeholder has a voice. By developing better tools, and bringing communities into the process of creating them, governments can ensure a more equitable future. To learn more, request a demo.
